DEAD

High-quality video dubbing from seconds of training data using a multi-person neural rendering prior. BMVC 2025.

Authors: Jack Saunders, Vinay P. Namboodiri — University of Bath

Venue: British Machine Vision Conference (BMVC) 2025

Abstract

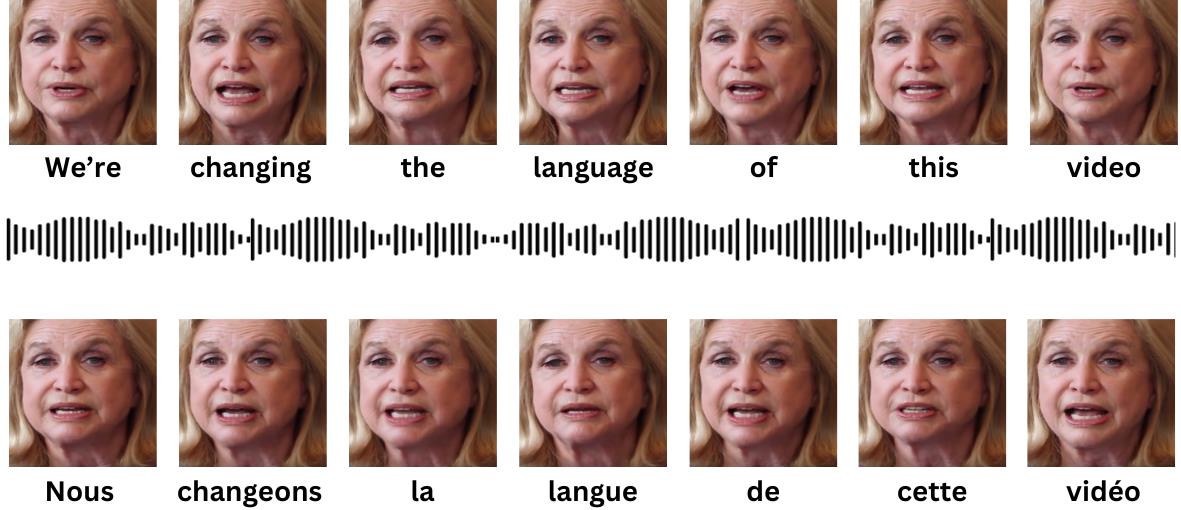

Visual dubbing is the process of generating lip motions of an actor in a video to synchronise with given audio, allowing video-based media to reach global audiences. Existing models either see only a single frame of the actor, and so cannot capture identity in the form of characteristic motion and idiosyncrasies, or require large amounts of training data and costly model training.

Our key insight is to train a large, multi-person prior network, which can then be rapidly adapted to new users with just a few seconds of data. This enables high-quality visual dubbing for any actor — from A-list celebrities to background extras. We demonstrate state-of-the-art visual quality and recognisability both quantitatively and qualitatively through two user studies, and show that our prior learning and adaptation method outperforms baselines under limited data conditions.

Citation

@inproceedings{Saunders2025DEAD,
  author    = {Saunders, Jack and Namboodiri, Vinay P.},
  title     = {DEAD: Data-Efficient Audiovisual Dubbing using Neural Rendering Priors},
  booktitle = {Proceedings of the British Machine Vision Conference (BMVC)},
  year      = {2025},
}