TalkLoRA

Personalised speech-driven facial animation using LoRA adaptors. Efficient transformer inference via chunking. BMVC 2024.

Authors: Jack Saunders, Vinay P. Namboodiri — University of Bath

Venue: British Machine Vision Conference (BMVC) 2024

Abstract

Transformer-based speech-driven facial animation models suffer from two key limitations: they are difficult to adapt to new personalised speaking styles, and they are computationally inefficient for long sentences due to the quadratic complexity of attention.

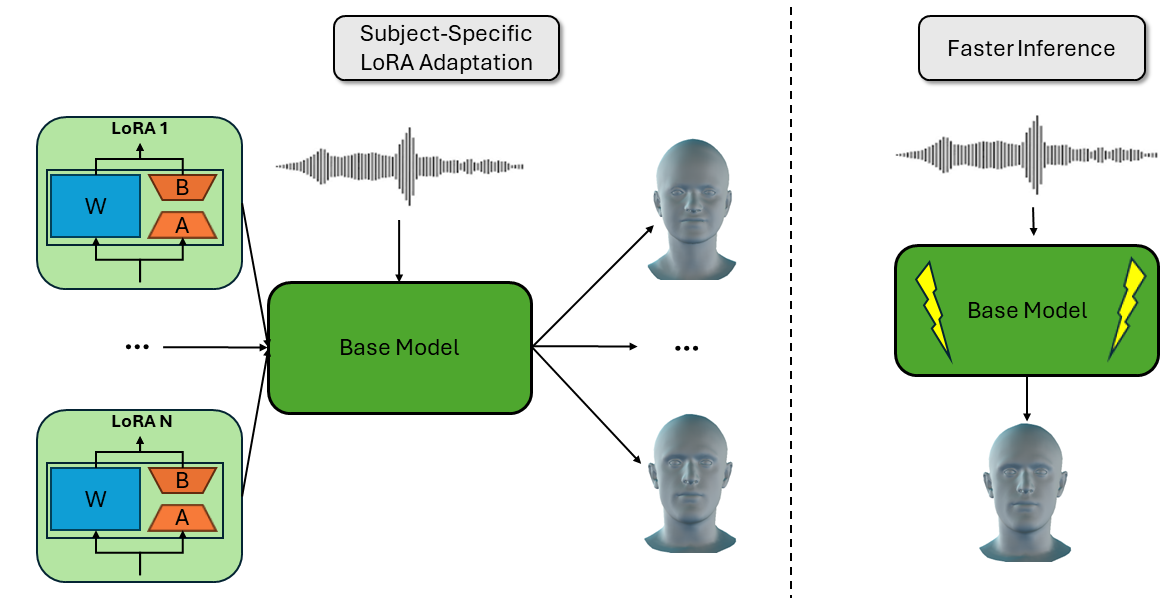

TalkLoRA addresses both. We apply Low-Rank Adaptation (LoRA) to learn small, subject-specific parameter adaptors that capture individual speaking styles with minimal training data. We additionally introduce a chunking strategy that processes audio in overlapping windows, reducing transformer complexity by an order of magnitude without sacrificing quality.
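To make the chunking argument concrete, the sketch below splits a sequence into overlapping windows and compares the number of pairwise attention scores with and without chunking. It is a minimal illustration, not the paper's implementation: the function names `chunk_spans` and `attention_cost`, the window length, and the overlap are assumptions chosen for the example, and the abstract does not say how overlapping predictions are merged.

```python
def chunk_spans(num_frames, chunk_len, overlap):
    """Return (start, end) index pairs for overlapping windows covering num_frames."""
    if not 0 <= overlap < chunk_len:
        raise ValueError("overlap must satisfy 0 <= overlap < chunk_len")
    stride = chunk_len - overlap
    spans = []
    start = 0
    while True:
        end = min(start + chunk_len, num_frames)
        spans.append((start, end))
        if end >= num_frames:
            break
        start += stride
    return spans


def attention_cost(spans):
    """Count pairwise attention scores: a window of n frames costs n * n."""
    return sum((end - start) ** 2 for start, end in spans)


# Hypothetical sizes (not from the paper): 1000 frames, 100-frame windows,
# 20 frames shared between neighbouring windows.
num_frames, chunk_len, overlap = 1000, 100, 20
spans = chunk_spans(num_frames, chunk_len, overlap)

full_cost = attention_cost([(0, num_frames)])  # one window over everything
chunked_cost = attention_cost(spans)           # sum over the short windows

print(f"{len(spans)} windows, full={full_cost}, chunked={chunked_cost}")
print(f"reduction factor: {full_cost / chunked_cost:.1f}x")
```

Because each window's cost is quadratic only in the window length, the total grows roughly linearly with sequence length, so the saving increases as sentences get longer.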

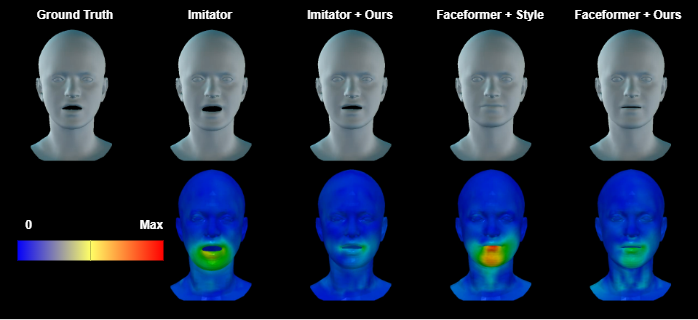

TalkLoRA achieves state-of-the-art style adaptation and provides practical guidance on LoRA hyperparameter selection for speech-driven animation.
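As a rough illustration of what a LoRA adaptor is, the sketch below wraps a frozen weight matrix with a trainable low-rank update, following the standard LoRA formulation `W x + (alpha / r) * B A x`. It uses plain Python lists so it runs without dependencies. The class name `LoRALinear`, the layer size, and the `rank` and `alpha` values are assumptions for the example; the abstract does not state which layers TalkLoRA adapts or which hyperparameters it recommends.

```python
import random


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


class LoRALinear:
    """Frozen weight W (d_out x d_in) plus a low-rank update (alpha / rank) * B @ A."""

    def __init__(self, weight, rank, alpha, seed=0):
        rng = random.Random(seed)
        d_out, d_in = len(weight), len(weight[0])
        self.weight = weight          # pretrained weight, kept frozen
        self.scale = alpha / rank
        # A starts small and random, B starts at zero, so the adaptor
        # initially leaves the pretrained layer's output unchanged.
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(rank)]
        self.B = [[0.0] * rank for _ in range(d_out)]

    def forward(self, x):
        base = matvec(self.weight, x)
        update = matvec(self.B, matvec(self.A, x))
        return [b + self.scale * u for b, u in zip(base, update)]

    def num_adaptor_params(self):
        """Number of trainable values stored per subject (A and B only)."""
        return len(self.A) * len(self.A[0]) + len(self.B) * len(self.B[0])


# Hypothetical sizes (not from the paper): a 64 x 64 layer adapted at rank 4.
rng = random.Random(1)
d = 64
weight = [[rng.gauss(0.0, 0.1) for _ in range(d)] for _ in range(d)]
x = [rng.gauss(0.0, 1.0) for _ in range(d)]

layer = LoRALinear(weight, rank=4, alpha=8)
base_params = d * d
print(f"base params: {base_params}, adaptor params: {layer.num_adaptor_params()}")
```

Only `A` and `B` would be trained and stored for each subject, which is why the per-subject footprint stays small relative to the base model. The rank controls that trade-off between adaptor size and capacity.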

Results

Citation

@inproceedings{Saunders2024TalkLoRA,
  author = {Saunders, Jack and Namboodiri, Vinay P.},
  title = {TalkLoRA: Low-Rank Adaptation for Speech-Driven Animation},
  booktitle = {Proceedings of the British Machine Vision Conference (BMVC)},
  year = {2024},
}